Early Paradigms for Coding with Generative AI

Over the last few months, Generative AI models such as ChatGPT, GPT-4, Claude, and Bard have radically changed my software development practices.

One of the most memorable tweets of the last year is by Andrej Karpathy: “The hottest new programming language is English”

Although the conversation is increasingly centered on the potential for Large Language Models (LLMs) to revolutionize code development, my practical experience of using them has been more nuanced. I have no doubt that things will rapidly improve as models and tooling are further developed, but current usage often requires us to adjust our traditional coding paradigms to better match the unique strengths and limitations of LLMs. We should remember that LLMs are powerful allies to developers, not replacements.

It’s hard to write about AI-assisted coding because the field is evolving so rapidly, but I have collected a few things I have personally found useful to think about. This article explores the practical applications of current LLMs in coding and the paradigms that can enhance their utility.

Please note that my perspective is rooted in my work in applied ML research, rather than in professional software development. As a result, your mileage may vary.

Best generative AI models and tools for coding:

I primarily use GPT-4 (through ChatGPT Plus or the API; see note 1 at the end). I have also found Bard and Claude (see note 2 at the end) to be good. There are a lot of different opinions on this but generally these three are considered top contenders (LLM Leaderboard). It’s worth trying out a few of the top models to get a feeling for what the different offerings are like. I expect a lot of rapid innovation on the model side (especially with open-source models).

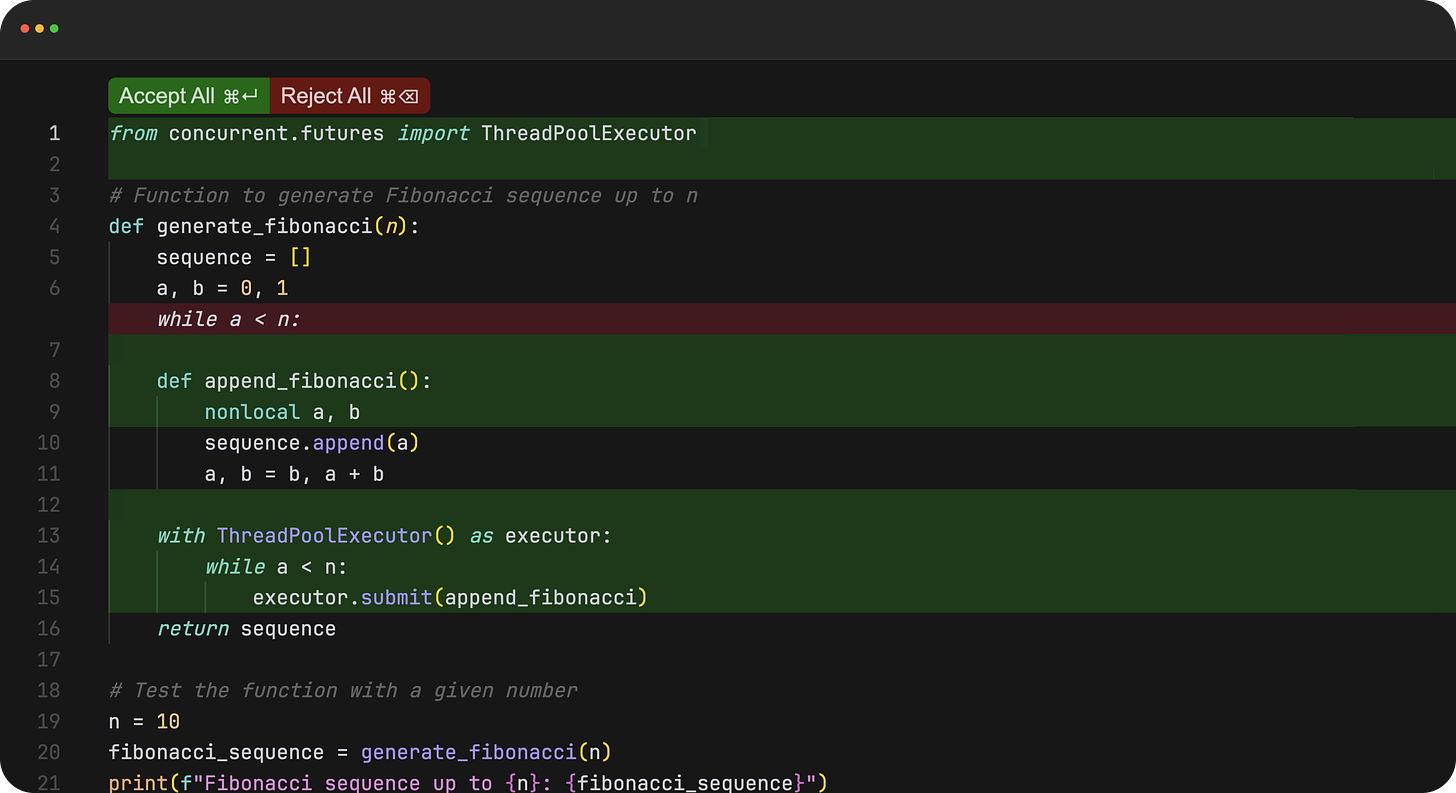

On the IDE side, the folks over at cursor.so have reimagined what an AI-first code editor should look like. They have a really unique UX where they offer “diff” style LLM-generated coding suggestions. I have found this to be a major upgrade from the autocomplete or chat-style interfaces. You can just plug in your preferred API key and get started. Cursor is still in its early days and they still have a long way to go before I can fully endorse switching over but I will definitely be keeping an eye on them.

Practical tips:

Break down your task: Work in small, discrete steps. Don’t ask the model to produce entire programs or scripts at once. Aim for iterative refinement instead of expecting a perfect solution in one go. Dissect your problem into smaller tasks and guide the model to solve each one individually.

Detail your requirements: A well-defined task often yields superior results. In my experience, the more specific and detailed you are about the task at hand, the better outcomes you can expect from the model.

Provide a blueprint: When feasible, give the model a high-level scaffolding or a general approach to solving the task.

Limit your interactions: Keep your conversation with ChatGPT within 3-4 messages. I've noticed a substantial drop in response quality beyond this point. If necessary, initiate a new discussion, while copying over the useful parts from the previous interaction.

Suggest specific packages/APIs: Interestingly, models often 'hallucinate' whole packages. When possible, determine the most appropriate packages or APIs yourself and guide the model accordingly.

Provide your instructions first: According to OpenAI’s recent prompting guide, you should provide your instructions first and then provide additional context (like supporting code or API docs). The extra context can be separated using “###”. For example:

Modify this code to allow for asynchronous function calls using Python’s asyncio module: ### Code ###

Give plenty of context: Imagine you are assigning a task to a junior developer. What information would they need to accomplish the task, assuming they can handle the technical aspects? Providing this context to the model will enhance its performance.
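To make the instructions-first layout concrete, here is a minimal sketch of a helper that assembles such a prompt. The function name build_prompt and the exact "### Label ###" header format are my own assumptions for illustration, not something prescribed by OpenAI's guide.

```python
def build_prompt(instruction: str, context_blocks: dict[str, str]) -> str:
    """Put the instruction first, then each context block under a ### header."""
    parts = [instruction.strip()]
    for label, body in context_blocks.items():
        # The "### Label ###" separator format is an assumption for illustration.
        parts.append(f"### {label} ###\n{body.strip()}")
    return "\n\n".join(parts)


prompt = build_prompt(
    "Modify this code to allow for asynchronous function calls "
    "using Python's asyncio module:",
    {"Code": "def fetch(url):\n    return download(url)"},
)
print(prompt)
```

Additional context, such as API docs, can be passed as further entries in the dictionary and will appear in insertion order after the instruction.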

Leverage Code TXT for small codebases: If you're working on a compact codebase, consider using Code TXT for GPT-4 Coding (https://github.com/petergpt/All-code-TXT-for-GPT-4-coding).

Utilize error messages for revisions: Feeding back error messages to the model can help refine its output. However, if the issue persists after 2-3 attempts, it’s probably not worthwhile to continue down the same path.
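As a rough sketch of this error-feedback loop with a cap on attempts: the ask_model callable below is hypothetical and stands in for whatever model API you use, and the prompt wording is only an assumption. The snippet runs the code without a timeout or sandbox, so it should only be used on code you have already reviewed.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, Optional


def run_snippet(code: str) -> Optional[str]:
    """Run code in a separate interpreter; return stderr on failure, None on success."""
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "snippet.py"
        path.write_text(code)
        # No timeout or sandboxing here: only run code you have reviewed.
        result = subprocess.run(
            [sys.executable, str(path)], capture_output=True, text=True
        )
    return None if result.returncode == 0 else result.stderr


def revise_with_errors(
    code: str, ask_model: Callable[[str], str], max_attempts: int = 3
) -> Optional[str]:
    """Feed error output back to the model, giving up after max_attempts revisions."""
    error = run_snippet(code)
    attempts = 0
    while error is not None and attempts < max_attempts:
        prompt = (
            "Fix the error in this code and return only the corrected code:\n"
            f"### Error ###\n{error}\n### Code ###\n{code}"
        )
        code = ask_model(prompt)  # hypothetical model call supplied by the caller
        error = run_snippet(code)
        attempts += 1
    # None signals that it is time to try a different approach.
    return code if error is None else None
```

Returning None after the cap, instead of looping indefinitely, mirrors the advice to abandon a path that has not worked after 2-3 attempts.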

Always review the output: Always scrutinize the provided code snippets to ensure they meet your requirements and don't introduce bugs or security issues.

Employ pseudocode when stuck: If articulating your problem becomes challenging, resort to pseudocode. It can serve as an effective bridge for the model to understand the logic you wish to implement, thereby helping you code it out more accurately.

Basic applications of generative AI in coding

1. Writing Scripts

Generative AI models can assist in automating tasks, such as script writing. For example: “Given a folder full of video files, write a script that extracts a frame every 5 seconds and saves them into a directory. To ensure proper organization, the frames from each video can be stored in their own folders named based on the originating video file”.
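For reference, a script answering that prompt might look roughly like the sketch below. It assumes the ffmpeg command-line tool is installed and on the PATH, and the set of video extensions and the frame file naming are my own choices for illustration.

```python
import subprocess
from pathlib import Path

# Assumed list of extensions; adjust to the files you actually have.
VIDEO_SUFFIXES = {".mp4", ".mov", ".mkv", ".avi"}


def frame_command(video: Path, output_root: Path, every_seconds: int = 5) -> list[str]:
    """Build an ffmpeg command that samples roughly one frame every N seconds."""
    frame_dir = output_root / video.stem  # one folder per source video
    return [
        "ffmpeg", "-i", str(video),
        "-vf", f"fps=1/{every_seconds}",
        str(frame_dir / "frame_%04d.png"),
    ]


def extract_frames(video_dir: Path, output_root: Path, every_seconds: int = 5) -> None:
    """Extract frames from every video file found directly in video_dir."""
    for video in sorted(video_dir.iterdir()):
        if video.suffix.lower() not in VIDEO_SUFFIXES:
            continue
        command = frame_command(video, output_root, every_seconds)
        # ffmpeg does not create the output folder, so create it first.
        Path(command[-1]).parent.mkdir(parents=True, exist_ok=True)
        subprocess.run(command, check=True)
```

Calling extract_frames(Path("videos"), Path("frames")) would then write the frames of videos/talk.mp4 into frames/talk/. Keeping the command construction in its own function makes the generated script easy to review before anything is executed.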

2. Writing Unit Tests

Writing unit tests with AI can be a little tricky, as it may lead to a false sense of security. Sometimes, AI models may write tangential or overly basic tests. Hence, it's essential to be specific about what the tests should cover rather than just instructing the AI to "Write some unit tests for this function."

3. Organization and Infrastructure

One of the largest benefits of Generative AI coding assistants in my work has come from my improved organization and ability to finally do things the “right way”. As a researcher, there are certain parts of software development that I have always dreaded, such as creating Docker containers. LLMs have been amazing at automating all the things that I know I should be doing but am sometimes too lazy to do.

4. Code Refactoring

I often use LLMs to make the code I have already written more efficient, cleaner, and easier to understand. By providing the existing code and clear instructions about what needs to be improved, such as reducing the code's complexity or increasing its modularity, you can often get good results.

5. Simple Error Debugging

LLMs are fantastic at reading error messages. For simple problems, you can often give them code snippets and the full stack trace to get better and faster results than Stack Overflow.

Coding paradigms for more advanced use

The efficacy of generative AI models in coding can be substantially improved by adhering to certain coding paradigms, such as code modularity and the SOLID Principles.

Modularity and SOLID Principles

Modularity involves breaking down your application into smaller, independent units of code. Because each unit can function independently of the others, it is easier for AI models to work within its context. This approach keeps each module and its context under the AI model's token limit, such as the 8,192-token context window of the base GPT-4 model.
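A quick way to check whether a module is likely to fit is to estimate its token count. The sketch below uses the rough rule of thumb of about four characters per token for English text, which is only an approximation; a real tokenizer will give different numbers, especially for code. The default limit and the reply reserve are assumptions you should adjust for your model.

```python
CHARS_PER_TOKEN = 4  # rough rule of thumb, not an exact tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count by rounding up len(text) / CHARS_PER_TOKEN."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(
    module_source: str,
    prompt: str,
    context_limit: int = 8192,  # assumed limit; set this for your model
    reply_reserve: int = 1500,  # assumed room left for the model's answer
) -> bool:
    """Check whether the module plus prompt leaves room for a reply."""
    used = estimate_tokens(module_source) + estimate_tokens(prompt)
    return used + reply_reserve <= context_limit
```

If a module fails this check, that is often a hint that it is doing too much and could be split.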

The SOLID principles offer a strong foundation for AI-assisted development:

Single Responsibility Principle: Each module should serve a single purpose or responsibility. This helps ensure that modules remain within the token limit of the model.

Open-Closed Principle: Modules should be open for extension but closed for modification. Design interfaces that allow the addition of new functionalities without modifying the existing code. By providing hooks or extension points, the AI can add functionalities without understanding or modifying the existing code.

Liskov Substitution Principle: When creating a new module that extends or depends on an existing one, ensure the new module is a proper subtype of the existing one. Defining the behavior of the existing module helps the AI generate code for the new module that complies with the behavioral constraints.

Interface Segregation Principle: Design small, specific interfaces instead of larger, general-purpose ones. This reduces the complexity that the AI has to handle and ensures that the generated code doesn't have unnecessary dependencies.

Dependency Inversion Principle: Modules should interact through abstract interfaces instead of direct code interaction. This reduces the context the AI needs to generate code, making the code more modular and easier to maintain.
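As one small illustration of the principles above, here is a sketch of dependency inversion in Python using typing.Protocol. The names UserStore, InMemoryUserStore, and AccountService are hypothetical and chosen only for this example.

```python
from typing import Optional, Protocol


class UserStore(Protocol):
    """Abstract interface: the only thing the model needs to see to use storage."""

    def save(self, username: str, password_hash: str) -> None: ...

    def find(self, username: str) -> Optional[str]: ...


class InMemoryUserStore:
    """One concrete implementation; a database-backed store could replace it."""

    def __init__(self) -> None:
        self._users: dict[str, str] = {}

    def save(self, username: str, password_hash: str) -> None:
        self._users[username] = password_hash

    def find(self, username: str) -> Optional[str]:
        return self._users.get(username)


class AccountService:
    """Depends on the UserStore interface, not on any concrete store."""

    def __init__(self, store: UserStore) -> None:
        self._store = store

    def register(self, username: str, password_hash: str) -> bool:
        if self._store.find(username) is not None:
            return False  # username already taken
        self._store.save(username, password_hash)
        return True


service = AccountService(InMemoryUserStore())
print(service.register("ada", "hash-1"))  # True
print(service.register("ada", "hash-2"))  # False
```

When asking a model to write a new store or to extend AccountService, you only need to paste the short UserStore interface as context, not the implementations behind it.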

(Good video on SOLID with much more detail here)

Multi-scale Interface Map

Maintaining a text mapping that defines the inputs and outputs between various components at multiple scales can greatly assist in providing the AI with the necessary context. For instance, one map may describe the input/outputs of each function in your class, while a higher-level map may outline how classes interact with each other.

For example, a lower-level map could just be function definitions with doc strings:

class User:
    def create_account(self, username, password):
        """
        Input: username (string), password (string)
        Output: User object
        """

    def login(self, username, password):
        """
        Input: username (string), password (string)
        Output: Login confirmation message
        """

    def delete_account(self, username):
        """
        Input: username (string)
        Output: Deletion confirmation message
        """

Higher-level maps could describe the interface between different classes:

User -> Post:

A User can create_post, edit_post, and delete_post.

create_post takes a title and content and returns a Post object.

edit_post takes a post_id, new_title, and new_content and returns an updated Post object.

delete_post takes a post_id and returns a confirmation message.

User -> Comment:

A User can add_comment and delete_comment.

add_comment takes a post_id and content and returns a Comment object.

delete_comment takes a comment_id and returns a confirmation message.

Post -> Comment:

A Post can get_comments.

get_comments takes no input and returns a list of Comment objects.

Maintaining such text-based descriptions of your program/module allows you to quickly give the model relevant context for your task. Until better tools are developed this can be the difference between LLMs being helpful to your project or being a waste of time.
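One way to keep a lower-level map in sync with the code is to generate it from the source. The sketch below uses Python's standard ast module (Python 3.9+ for ast.unparse) to keep class names, method signatures, and docstrings while dropping method bodies. The function name interface_map and the output layout are my own assumptions for illustration.

```python
import ast


def interface_map(source: str) -> str:
    """Return class and method signatures with docstrings, without method bodies."""
    lines = []
    for node in ast.parse(source).body:
        if not isinstance(node, ast.ClassDef):
            continue  # this sketch only maps top-level classes
        lines.append(f"class {node.name}:")
        for item in node.body:
            if isinstance(item, ast.FunctionDef):
                lines.append(f"    def {item.name}({ast.unparse(item.args)}):")
                doc = ast.get_docstring(item)
                if doc:
                    lines.extend(f"        {line}" for line in doc.splitlines())
    return "\n".join(lines)


example = '''
class User:
    def login(self, username, password):
        """
        Input: username (string), password (string)
        Output: Login confirmation message
        """
        return check(username, password)
'''
print(interface_map(example))
```

The printed map contains the login signature and its Input/Output lines but not the call to check, so it stays short enough to paste into a prompt.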

Dialogue-Driven Development

This is an interaction model where the programmer asks the AI to perform high-level coding tasks, and the LLM asks questions until it has enough context to complete the task.

A typical interaction could be as follows:

Developer: "Create a class named 'Car' with properties for 'color', 'make', 'model', and 'year'."

AI: "Sure, do you want these properties to be private or public?"

Developer: "Make them private and provide getter and setter methods for each."

An interesting (but still fairly limited) example of this is GPTEngineer.

I haven’t had much success with the dialogue approach using the current generation of models. I often find that they ask a list of relevant questions but not always the most important ones. However, I am excited by the future potential of dialogue-driven development and expect rapid improvement in this interaction model in the next few years.
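The question-and-answer loop described in this section can be sketched as follows. Both ask_model and ask_developer are hypothetical callables supplied by the caller, and the "QUESTION:" prefix is a convention I am assuming for illustration; real models will not always follow such a convention reliably.

```python
from typing import Callable

Message = dict[str, str]

PROTOCOL = (
    "If you need more detail, reply with one line starting with 'QUESTION:'. "
    "Otherwise reply with the code only."
)


def dialogue(
    task: str,
    ask_model: Callable[[list[Message]], str],
    ask_developer: Callable[[str], str],
    max_questions: int = 5,
) -> str:
    """Let the model ask clarifying questions before it writes the code."""
    messages: list[Message] = [{"role": "user", "content": f"{task}\n{PROTOCOL}"}]
    for _ in range(max_questions):
        reply = ask_model(messages)
        if not reply.startswith("QUESTION:"):
            return reply  # the model had enough context
        answer = ask_developer(reply[len("QUESTION:"):].strip())
        messages.append({"role": "assistant", "content": reply})
        messages.append({"role": "user", "content": answer})
    # Question budget used up: ask for the code with what is known so far.
    messages.append({"role": "user", "content": "No more questions; write the code now."})
    return ask_model(messages)
```

Capping the number of questions keeps the conversation short, in line with the earlier tip about limiting interactions.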

Ending thoughts

In conclusion, I find myself fascinated, not so much by the pure technical prowess of these Generative AI models, but by the shift in mindset they invite us to make. At their core, these tools challenge us to rethink our coding practices, recalibrate our expectations, and repurpose our skills to engage with coding in a fundamentally novel way.

While the potential is undoubtedly vast, we need a grounded and pragmatic approach toward AI-assisted coding. It's important to remember that these tools are still limited and are not magic wands that instantly solve all coding challenges. With clear intentions, thought-out strategies, and careful application, we can work collaboratively with these AI models to foster a more efficient and enriching coding environment.

Other great articles on the topic:

https://ai.plainenglish.io/coding-with-chatgpt-gpt-3-5-and-gpt-4-a-quick-guide-7ea32a6a6e0

https://towardsdatascience.com/3-great-ways-use-chatgpt-gpt-4-better-coding-7fb94e86be3e

https://www.allendowney.com/blog/2023/04/02/llm-assisted-programming


Office hours:

I hold weekly consulting office hours for researchers, startups, and businesses trying to leverage generative AI. If you are interested shoot me a message on LinkedIn.

Note on GPT-4 via ChatGPT Plus vs. API

I cannot confirm this myself, but there has been some anecdotal discussion that GPT-4 via the ChatGPT Plus interface performs worse at coding (possibly because it was replaced by a quantized or distilled version). Some claim the API has remained unchanged.

Note on Claude’s Context Window

I have been trying out Claude Instant’s 100k context window and am pretty impressed. It definitely unlocks some previously unavailable coding use cases. However, its overall coding competency seems to be lower than GPT-4. I usually find with multi-scale interface maps and good modularity I can get better results with more powerful models with smaller context windows.

Nice guide thanks.

Note that the reason you must keep the conversation short is the token window. ChatGPT is just a front end that forwards to GPT the last user prompt plus as many of the previous interactions as fit within the model's token window. The remaining messages are silently discarded, as no data aggregation is performed.

FYI, there seems to be a bug in the edit-comment function: edited comments temporarily disappear until the page is reloaded.